Inside Robot Dreams: What Google's DeepDream Bot Thinks About

Do androids dream of electric sheep? Actually, it's not far off.

Artificial neural networks have taken giant leaps in image classification. And yet experts actually know very little about how they work - or why they fail.

In order to visualize what's going on, three software engineers at Google decided to turn the DeepDream neural network on its head. Alexander Mordvintsev, Christopher Olah and Mike Tyka asked their artificial intelligence bot to enhance an image in such a way as to elicit a particular interpretation - say, a banana.

"Start with an image full of random noise," said the engineers, "then gradually tweak the image towards what the neural net considers a banana. By itself, that doesn’t work very well, but it does if we impose a prior constraint that the image should have similar statistics to natural images, such as neighboring pixels needing to be correlated."

Hey look! It generated a banana. Or at least something that you or I can recognize as a banana.
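To get a feel for the recipe the engineers describe, here is a minimal toy sketch in plain Python. It is not Google's code. It assumes a one-dimensional "image" of eight pixels, and it swaps the real neural network for a hypothetical `BANANA_TEMPLATE`, a list of weights that stands in for "what the net considers a banana". The names `class_score`, `roughness` and `synthesize` are made up for illustration. The "prior" here is simply a penalty on neighboring pixels that differ a lot.

```python
import random

# Hypothetical stand-in for "what the net considers a banana": positive weights
# mark pixels the class likes bright, negative weights pixels it likes dark.
BANANA_TEMPLATE = [-1, -1, 1, 1, 1, -1, -1, -1]


def class_score(image, template):
    """Toy class score: how strongly the image matches the template."""
    return sum(p * t for p, t in zip(image, template))


def roughness(image):
    """Prior penalty: squared differences between neighboring pixels."""
    return sum((b - a) ** 2 for a, b in zip(image, image[1:]))


def roughness_gradient(image):
    """Gradient of roughness() with respect to each pixel."""
    grad = [0.0] * len(image)
    for i in range(len(image) - 1):
        diff = image[i + 1] - image[i]
        grad[i] -= 2 * diff
        grad[i + 1] += 2 * diff
    return grad


def random_noise(size, seed=0):
    """An 'image full of random noise' with pixel values in [-1, 1]."""
    rng = random.Random(seed)
    return [rng.uniform(-1, 1) for _ in range(size)]


def synthesize(template, prior_weight=0.5, steps=500, step_size=0.05, seed=0):
    """Gradually tweak noise toward a higher class score, minus the prior penalty."""
    image = random_noise(len(template), seed)
    for _ in range(steps):
        prior_grad = roughness_gradient(image)
        # For this linear toy score, the gradient with respect to a pixel is
        # just its template weight. Pixels are clamped to stay in [-1, 1].
        image = [
            max(-1.0, min(1.0, p + step_size * (t - prior_weight * g)))
            for p, t, g in zip(image, template, prior_grad)
        ]
    return image


noise = random_noise(len(BANANA_TEMPLATE))
with_prior = synthesize(BANANA_TEMPLATE, prior_weight=0.5)
without_prior = synthesize(BANANA_TEMPLATE, prior_weight=0.0)
```

In this toy, both runs end with a higher class score than the starting noise, but the run with the prior has softer transitions between neighboring pixels. A real network is far less forgiving than a linear template, which is presumably why the engineers needed the prior to get anything recognizable at all.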

Now here's the really wacky bit. Neural networks that were trained to discriminate between different kinds of images also develop the ability to generate images.

As in, to think. To create. To "dream".

So how are these neural networks coming up with original images?

Like you or me, they need a source of inspiration. A memory. An idea.

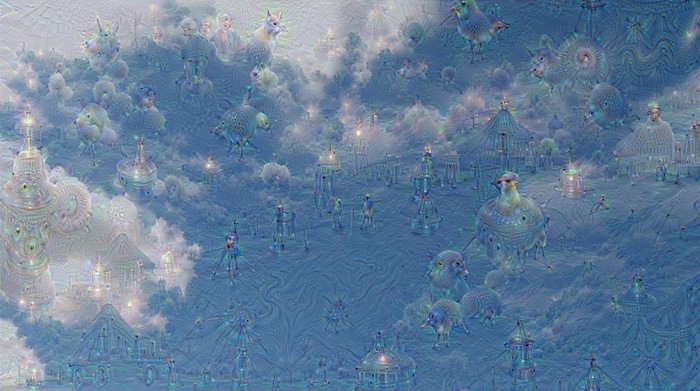

"We just start with an existing image [of sky] and give it to our neural net. We ask the network: 'Whatever you see there, I want more of it!'"

This creates a feedback loop: if a cloud looks a little bit like a bird, the network will make it look more like a bird. This in turn will make the network recognize the bird even more strongly on the next pass and so forth, until a highly detailed bird appears, seemingly out of nowhere.
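That feedback loop can be sketched in a few lines of plain Python. Again, this is a toy under loud assumptions, not the DeepDream implementation: the "image" has four pixels, and the hypothetical `DETECTORS` dictionary stands in for one layer of a trained network, with a "bird" and a "fish" feature. Each pass nudges the pixels in whatever direction makes the currently firing detectors fire harder.

```python
# Hypothetical feature detectors standing in for one layer of a trained network.
DETECTORS = {
    "bird": [1, -1, 1, -1],
    "fish": [1, 1, -1, -1],
}


def activations(image, detectors):
    """How strongly each detector fires on the image (ReLU of a dot product)."""
    return {
        name: max(0.0, sum(p * w for p, w in zip(image, weights)))
        for name, weights in detectors.items()
    }


def dream_step(image, detectors, step_size=0.1):
    """One pass of 'whatever you see there, I want more of it'."""
    acts = activations(image, detectors)
    # Gradient of 0.5 * sum(activation ** 2) with respect to each pixel.
    grad = [0.0] * len(image)
    for name, act in acts.items():
        for i, w in enumerate(detectors[name]):
            grad[i] += act * w
    scale = sum(abs(g) for g in grad) / len(grad)
    if scale == 0.0:
        return list(image)  # nothing fires, so there is nothing to amplify
    # Normalize the step and keep pixels in the valid range [-1, 1].
    return [
        max(-1.0, min(1.0, p + step_size * g / scale))
        for p, g in zip(image, grad)
    ]


def dream(image, detectors, passes=10):
    """Feed the output of each pass back in as the next input."""
    for _ in range(passes):
        image = dream_step(image, detectors)
    return image


cloud = [0.1, 0.0, 0.2, 0.0]  # looks a little bit like a bird
result = dream(cloud, DETECTORS)
```

The starting "cloud" triggers the bird detector only weakly and the fish detector not at all. After ten passes the image has been pushed almost exactly onto the bird pattern, while the fish detector stays quiet because it never fired in the first place.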

"The results are intriguing - even a relatively simple neural network can be used to over-interpret an image, just like as children we enjoyed watching clouds and interpreting the random shapes."

"This network was trained mostly on images of animals, so naturally it tends to interpret shapes as animals. But because the data is stored at such a high abstraction, the results are an interesting remix of these learned features."

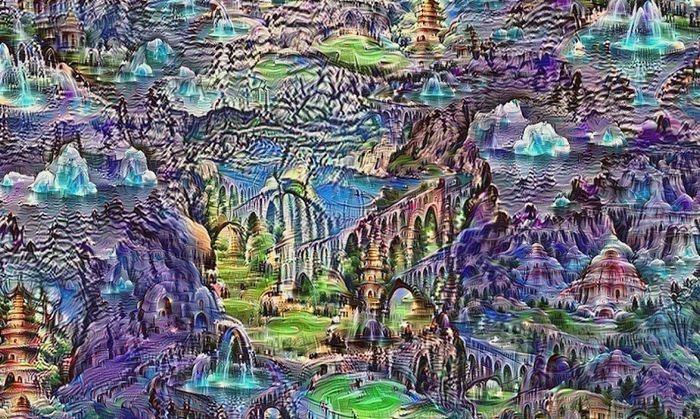

"This technique gives us a qualitative sense of the level of abstraction that a particular layer has achieved in its understanding of images. We call this technique Inceptionism in reference to the neural net architecture used."

See the team's Inceptionism gallery for more pairs of images and their processed results.

Neural Net Dreams

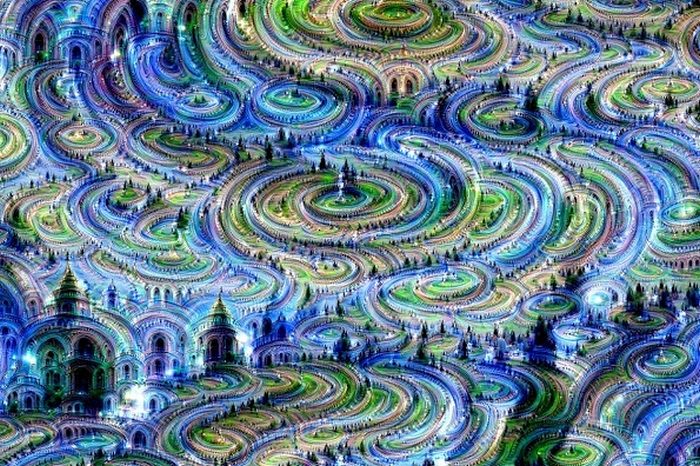

The coolest thing about this process is that the deeper you go, the more creativity you find.

The engineers found that if they apply the algorithm over and over to the same image, zooming in on the most complex or interesting parts as they go, an endless stream of impressions emerges.
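The apply-then-zoom loop might look something like the sketch below. It is only an illustration: `enhance` is a hypothetical placeholder for whatever dreaming step you plug in, the image is a plain list of rows, and the zoom always targets the center of the frame rather than hunting for the "most interesting" part.

```python
def zoom_center(image, factor=2):
    """Crop the central region and scale it back up (nearest neighbor)."""
    height, width = len(image), len(image[0])
    top = (height - height // factor) // 2
    left = (width - width // factor) // 2
    return [
        [image[top + row // factor][left + col // factor] for col in range(width)]
        for row in range(height)
    ]


def dream_sequence(image, enhance, frames=3, factor=2):
    """Alternate between enhancing the image and zooming into it."""
    sequence = []
    for _ in range(frames):
        image = enhance(image)  # hypothetical dreaming step supplied by the caller
        sequence.append(image)
        image = zoom_center(image, factor)
    return sequence


# Usage with a do-nothing enhancer, just to show the zoom at work.
picture = [
    [0, 1, 2, 3],
    [4, 5, 6, 7],
    [8, 9, 10, 11],
    [12, 13, 14, 15],
]
frames = dream_sequence(picture, enhance=lambda img: img, frames=2)
```

Because every frame starts from a magnified piece of the previous one, small details the network added in one pass become the raw material for the next pass.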

Take a look at these neural network "dreams" generated purely from random noise.

What do you think of these robot "dreams"? Impressed? Inspired? Perplexed?

Generate Your Own Images

Visit the online Deep Dream Generator to upload your own images and have them interpreted.

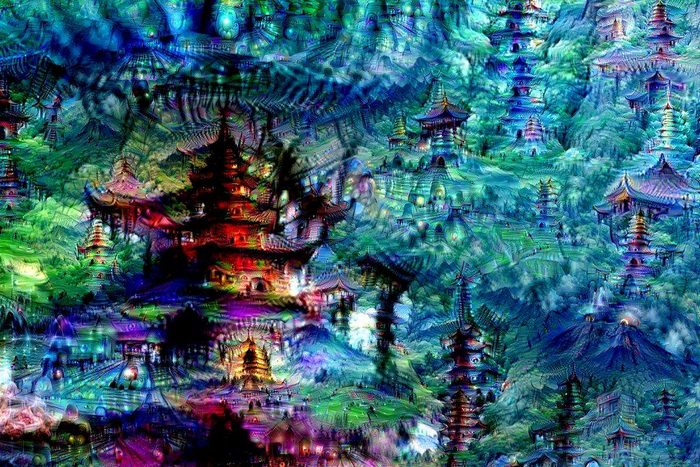

Here's what happened when I ran some of my lucid dream digital paintings through it:

Alien From The Deep - see original

Tell us what you think of neural network creativity over in our forums.